Introduction

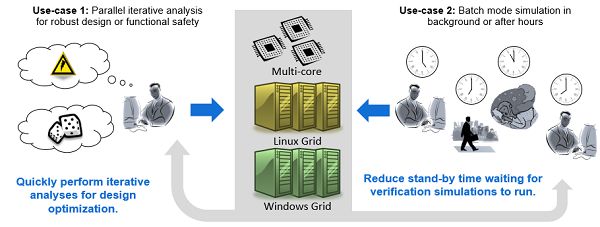

Saber Runtime parallelizes and distributes iterative simulations across computing resources to dramatically improve throughput and reduce simulation time.

The Saber Runtime library works with SaberRD, Saber Functional Safety Add-on, and Saber Inspecs Add-on to minimize the time spent running valuable performance and safety simulations. With Saber Runtime, engineering and safety teams can simulate thousands of scenarios that would otherwise have been too costly to perform.

Saber Runtime Module Feature Highlights


Boosting Simulation Throughput

Robust design methodologies require advanced sensitivity and statistical analyses to verify the reliability of complex power networks. These analyses consist of iterated simulations requiring hundreds or thousands of runs, which is impractical on a single CPU. The Saber environment solves this problem by distributing the iterated simulations across a compute grid, allowing multiple CPUs to perform the analyses in much less time. When the simulations are complete, the results are gathered into a single data file for easy processing.
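The distribute-and-gather pattern described above can be sketched in plain Python. This is not the Saber Runtime API: `run_simulation` is a hypothetical stand-in for one real simulation run, and threads on one machine stand in for the CPUs of a compute grid. Each parameter value becomes an independent run, and all results are merged into one CSV table, mirroring the single data file.

```python
from concurrent.futures import ThreadPoolExecutor
import csv
import io


def run_simulation(run_id: int, gain: float) -> dict:
    """Hypothetical stand-in for one simulation run.

    A real run would invoke the simulator; here we compute a value from the
    run's parameter so the example is self-contained.
    """
    return {"run": run_id, "gain": gain, "output": 5.0 * gain}


def distribute_and_gather(params: list[float], workers: int = 4) -> str:
    """Run every parameter value in parallel and merge the results into one CSV."""
    with ThreadPoolExecutor(max_workers=workers) as pool:
        # map() yields results in submission order, even if runs finish out of order
        results = list(pool.map(run_simulation, range(len(params)), params))

    # Gather step: all per-run results go into a single table
    buffer = io.StringIO()
    writer = csv.DictWriter(buffer, fieldnames=["run", "gain", "output"])
    writer.writeheader()
    writer.writerows(results)
    return buffer.getvalue()


if __name__ == "__main__":
    print(distribute_and_gather([0.5, 1.0, 1.5]))
```

Keeping each run independent (its own inputs, its own result record) is what allows the runs to be spread across CPUs and then combined without coordination.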

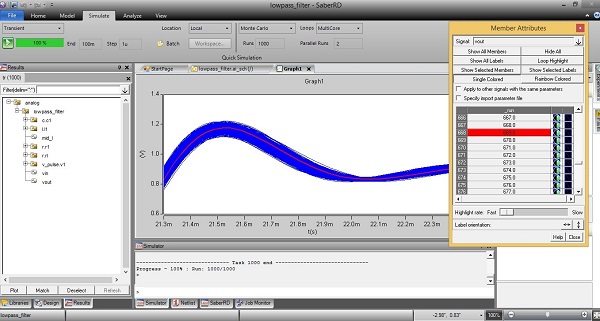

To run a simulation with the Runtime add-on, you fill in the Loops, Parallel Runs, and the number of Runs.
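The article does not define how these fields interact, so the following is only a back-of-the-envelope sketch under a stated assumption: "Runs" is taken as the total number of simulation runs and "Parallel Runs" as the number executed at the same time; "Loops" is not modeled. `plan_waves` is a hypothetical helper, not part of Saber, and it assumes every run takes the same time.

```python
import math


def plan_waves(total_runs: int, parallel_runs: int, seconds_per_run: float) -> tuple[int, float]:
    """Estimate scheduling waves and ideal wall-clock time (hypothetical model).

    Assumes equal-length runs, exactly `parallel_runs` runs executing at once,
    and no scheduling overhead. The 'Loops' field is not modeled.
    """
    if total_runs < 0 or parallel_runs < 1:
        raise ValueError("need total_runs >= 0 and parallel_runs >= 1")
    # Each wave runs up to `parallel_runs` simulations side by side
    waves = math.ceil(total_runs / parallel_runs)
    return waves, waves * seconds_per_run


if __name__ == "__main__":
    print(plan_waves(1000, 24, 220.5))
```

For example, `plan_waves(1000, 24, 220.5)` returns `(42, 9261.0)`: 42 waves of at most 24 runs each. Real elapsed times will be higher once overhead is included.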


Link Between Saber Runtime and Advanced Analyses

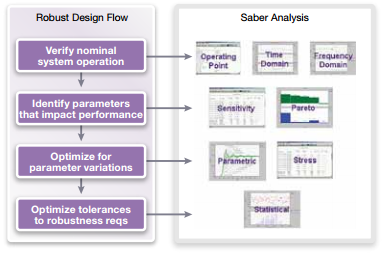

Saber’s advanced analyses (sensitivity, parametric, statistical, stress) give design teams the tools needed to implement robust design techniques. With these analyses, design teams can identify the parameters that most affect system performance, establish tolerances for the most critical parameters, investigate how performance is affected by random changes in tolerances, and analyze the operational stresses placed on system components.

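Because these kinds of simulations can run for hours or even days, the Runtime module can reduce the elapsed time by up to a factor equal to the number of available CPU cores. In practice the gain is smaller: in the cam phaser example later in this article, a 24-CPU grid completes the analysis just over 12 times faster than a single CPU.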
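The "divide by the number of cores" figure is an ideal upper bound. In standard parallel-computing notation (not taken from the Saber documentation), with $T_1$ the wall-clock time for all runs on one core and $T_P$ the time on $P$ cores, the speedup $S$ and efficiency $E$ are:

```latex
S(P) = \frac{T_1}{T_P} \le P, \qquad
T_P \ge \frac{T_1}{P}, \qquad
E(P) = \frac{S(P)}{P} \le 1
```

Dividing the time exactly by the number of cores corresponds to the ideal case $S(P) = P$, i.e. $E(P) = 1$; any scheduling or result-gathering overhead pushes $E(P)$ below 1.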

A detailed statistical analysis is the foundation of a robust design methodology. Tolerances and statistical distributions are assigned to the parameters that most affect subsystem performance.

Once the statistical setup is complete, the number of simulation runs required to produce statistically meaningful results must be determined. The number of runs depends on the complexity and characteristics of the design; typically, several hundred to a few thousand runs are required.
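To illustrate why the run count matters, here is a minimal Monte Carlo sketch in plain Python (not Saber's statistical analysis engine). It assumes a hypothetical 12 V resistive divider whose two 10 kΩ resistors carry a 1 % tolerance, modeled as ±3σ of a normal distribution. The standard error of the estimated mean output falls as $1/\sqrt{N}$, so each tenfold reduction in that uncertainty costs a hundredfold more runs.

```python
import random
import statistics


def divider_output(vin: float, r1: float, r2: float) -> float:
    """Output voltage of a resistive divider (hypothetical stand-in for a simulation)."""
    return vin * r2 / (r1 + r2)


def monte_carlo(runs: int, seed: int = 1) -> tuple[float, float, float]:
    """Sample toleranced parameters and return (mean, stdev, standard error).

    Assumption: each 1 % resistor tolerance is treated as +/-3 sigma of a
    normal distribution around the 10 kOhm nominal value.
    """
    if runs < 2:
        raise ValueError("need at least 2 runs to estimate a standard deviation")
    rng = random.Random(seed)
    sigma = 10_000.0 * 0.01 / 3  # 1 % tolerance = 3 sigma
    outputs = []
    for _ in range(runs):
        # Each run differs only in the randomly drawn parameter values
        r1 = rng.gauss(10_000.0, sigma)
        r2 = rng.gauss(10_000.0, sigma)
        outputs.append(divider_output(12.0, r1, r2))
    mean = statistics.fmean(outputs)
    stdev = statistics.stdev(outputs)
    return mean, stdev, stdev / runs ** 0.5


if __name__ == "__main__":
    for n in (100, 1000, 10000):
        mean, sd, se = monte_carlo(n)
        print(n, round(mean, 4), round(sd, 5), round(se, 6))
```

The spread of the output (the standard deviation) stays roughly constant as runs are added; what improves is the confidence in the estimated statistics, which is why analyses need hundreds to thousands of runs.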


Distributed Computing

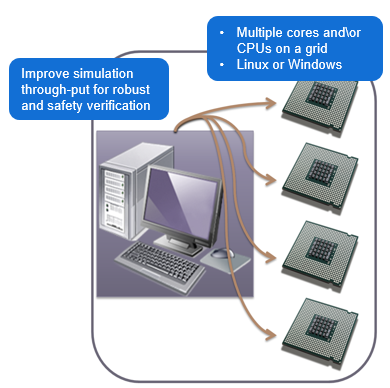

In a distributed computing environment, compute-intensive loads that have traditionally been executed on a single computer can be spread across a grid of multiple CPUs. This is key for performing statistical analyses on complex automotive subsystems.

By its very nature, a statistical analysis is a series of individual simulation runs. Each run differs from the others only in its parameter values. This is analogous to building prototypes using parts with different values. On a single CPU, these individual runs are executed in series. In a distributed computing environment, individual runs are spread across the compute grid and are executed in parallel. This parallel processing capability significantly improves simulation performance, and the achievable speedup grows with the number of computers on the grid.
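Because the runs are independent, they can execute in any order on any CPU. One common way to keep such a distributed analysis reproducible (a general technique, not necessarily what Saber does) is to derive each run's random parameter values from a fixed base seed and the run index. The sketch below uses a hypothetical divider circuit and a hypothetical `BASE_SEED`, with threads standing in for grid CPUs, and shows that serial and parallel execution produce identical results.

```python
import random
from concurrent.futures import ThreadPoolExecutor

BASE_SEED = 2024  # hypothetical seed for the whole analysis


def parameters_for_run(run_index: int) -> dict:
    """Draw one run's parameter values from a generator seeded by the run index."""
    # Combine base seed and run index into a unique integer seed
    # (unique as long as run_index < 1_000_003)
    rng = random.Random(BASE_SEED * 1_000_003 + run_index)
    return {"r1": rng.gauss(10_000.0, 33.3), "r2": rng.gauss(10_000.0, 33.3)}


def simulate(run_index: int) -> float:
    """Hypothetical single run: output of a 12 V resistive divider."""
    p = parameters_for_run(run_index)
    return 12.0 * p["r2"] / (p["r1"] + p["r2"])


def run_serial(n: int) -> list[float]:
    """Execute the runs one after another, as on a single CPU."""
    return [simulate(i) for i in range(n)]


def run_parallel(n: int, workers: int = 8) -> list[float]:
    """Execute the runs concurrently; results come back in run-index order."""
    with ThreadPoolExecutor(max_workers=workers) as pool:
        return list(pool.map(simulate, range(n)))


if __name__ == "__main__":
    print(run_serial(200) == run_parallel(200))
```

Seeding per run, rather than drawing all values from one shared generator, means a run's parameters do not depend on which CPU picks it up or when.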

Traffic on the grid is controlled by a grid manager program, which manages the grid resources and monitors grid traffic to know when to submit additional simulation tasks. Once a CPU on the grid is assigned a simulation task, the CPU is “locked” while the simulation runs. When the simulation is complete, results are assembled into a common data file, the compute resource is released, and the grid manager repeats the process until the overall statistical analysis is complete.
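The grid manager's behavior can be approximated with a simple list-scheduling simulation. This is a hypothetical model, not Saber's grid manager: a task is submitted as soon as any CPU is free, the CPU stays locked until that run finishes, and scheduling and result-collection overhead are ignored. A min-heap tracks when each CPU becomes free again.

```python
import heapq


def grid_makespan(run_times: list[float], cpus: int) -> float:
    """Simulate a simple grid manager and return the total wall-clock time.

    Hypothetical model: submit each run to the earliest-free CPU, lock that
    CPU until the run ends, then release it. Overhead is ignored.
    """
    if cpus < 1:
        raise ValueError("cpus must be >= 1")
    free_at = [0.0] * cpus  # time at which each CPU becomes free
    heapq.heapify(free_at)
    finish = 0.0
    for duration in run_times:
        start = heapq.heappop(free_at)  # earliest available CPU
        end = start + duration          # CPU is locked until the run ends
        finish = max(finish, end)
        heapq.heappush(free_at, end)    # release the CPU at 'end'
    return finish


if __name__ == "__main__":
    print(grid_makespan([10.0] * 48, 24))
    print(grid_makespan([10.0] * 49, 24))
```

With 24 CPUs, 48 equal 10 s runs finish in 20 s, but a 49th run adds a full extra 10 s; this kind of tail effect is one reason real speedups fall short of the CPU count.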

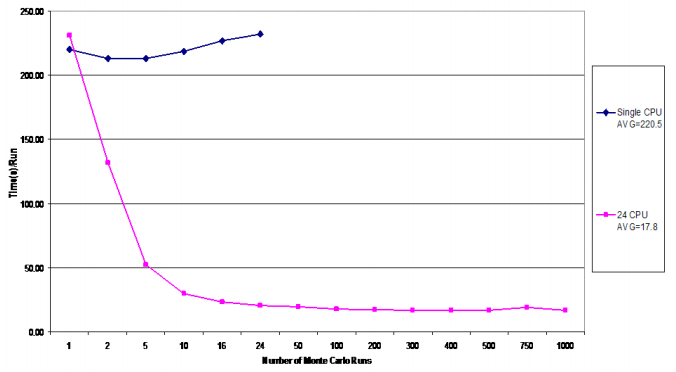

Fig 4: Single-CPU vs. compute grid simulation performance for the statistical analysis of the cam phaser subsystem

As shown in the figure above, the average execution time per run of the statistical analysis is 220.5 seconds on a single CPU and 17.8 seconds on a grid of 24 CPUs. Based on these averages, a single CPU running 24 hours a day would require just over 2.5 (2.55) days to complete the 1000-run Monte Carlo analysis, whereas the compute grid finishes the same 1000-run analysis in just under 5 (4.94) hours. The compute grid is therefore able to complete the analysis over 12 times faster than a single CPU. This represents a significant decrease in the time it takes to complete the subsystem design, resulting in considerable savings in development resources and a faster time to market.
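The figures above can be checked with a few lines of arithmetic. The inputs are the article's own averages; the 17.8 s grid value is treated as the effective time per run (total wall-clock time divided by the number of runs), which is how the article's 4.94-hour total is obtained. The efficiency line is derived here, not quoted from the source.

```python
SECONDS_PER_HOUR = 3600.0
RUNS = 1000           # runs in the Monte Carlo analysis
single_cpu_s = 220.5  # average time per run on one CPU
grid_s = 17.8         # effective average time per run on the grid
cpus = 24             # CPUs in the grid

single_cpu_days = RUNS * single_cpu_s / SECONDS_PER_HOUR / 24
grid_hours = RUNS * grid_s / SECONDS_PER_HOUR
speedup = single_cpu_s / grid_s
efficiency = speedup / cpus  # fraction of the ideal 24x speedup achieved

print(f"{single_cpu_days:.2f} days, {grid_hours:.2f} h, "
      f"speedup {speedup:.1f}x, efficiency {efficiency:.0%}")
# prints "2.55 days, 4.94 h, speedup 12.4x, efficiency 52%"
```

A speedup of about 12.4 on 24 CPUs means the grid reaches roughly half of the ideal "divide by the number of cores" figure.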

In conclusion

The Runtime add-on module improves throughput and reduces simulation time through distributed computing.

Parallelize 1000s of simulations using Saber Runtime (for Robust Design and Functional Safety simulations): use Saber Runtime to improve throughput on multi-core workstations, or distribute functional safety simulation jobs across your company’s grid for maximum simulation efficiency.

The statistical analysis implements the virtual manufacturing environment while grid computing reduces the time required to gather complete simulation data.